How (not) to measure the efficacy of drugs

Posted by Henry Bauer on 2015/02/19

Innumerable books and articles have described the flaws of contemporary drug-based medicine, notably the way drugs are approved: the Food and Drug Administration requires only 2 successful trials of 6 months' duration, even if there have been many unsuccessful trials as well. Accordingly, drugs have had to be withdrawn from the market because of their toxicity sooner and sooner after their initial approval (p. 238 ff. in Dogmatism in Science and Medicine, McFarland 2012). It is becoming quite common to see a drug advertised by its manufacturer at the same time as a law firm is canvassing for patients harmed by the drug to join its class-action suit (today, for example, with Xarelto, approved in 2008 and for extended uses in 2011).

Not widely noted or understood is that the statistical criterion for efficacy of a drug is inappropriate. What concerns patients (and ought to concern doctors) is how big an effect a drug has; but the approval process only requires that it be better than placebo, or than a competing drug, at “statistical significance” of p ≤ 0.05. The latter is already a very weak criterion: a drug with no real effect at all would still pass it about once in 20 trials. But even more inappropriate is that the effect size need not be large. If one uses a large enough number of guinea pigs, even a tiny difference can become “statistically significant”. For instance, clopidogrel (Plavix) is prescribed for prevention of stroke, and a study found it better at 75 mg/day, at statistical significance of p = 0.043, than aspirin at 325 mg/day. But it took nearly 20,000 trial subjects to reach this conclusion, because the reduction in risk of an adverse event was only from 5.83% (per year) to 5.32%*. One might judge this as trivial and not worth the extra cost and extra danger of side effects compared to aspirin, one of the safest drugs as demonstrated by decades of use.
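To see how sample size alone can turn a small difference into a “significant” one, here is a minimal Python sketch. It applies a textbook pooled two-proportion z-test to the 5.83% and 5.32% rates quoted above, with hypothetical equal-sized arms. This is an assumption made for illustration only: it treats the annual rates as simple proportions and does not reproduce the trial's own analysis, so it will not return the published p = 0.043.

```python
import math


def two_proportion_p_value(rate_a, rate_b, n_per_arm):
    """Two-sided p-value of a pooled two-proportion z-test with equal arms."""
    # With equal arm sizes the pooled event rate is just the mean of the two.
    pooled = (rate_a + rate_b) / 2
    standard_error = math.sqrt(pooled * (1 - pooled) * 2 / n_per_arm)
    z = abs(rate_a - rate_b) / standard_error
    # For a standard normal variable, P(|Z| > z) = erfc(z / sqrt(2)).
    return math.erfc(z / math.sqrt(2))


# Same event rates every time; only the (hypothetical) arm size changes.
for n in (1_000, 10_000, 100_000):
    p = two_proportion_p_value(0.0583, 0.0532, n)
    print(f"{n:>7} per arm: p = {p:.4g}")
```

The difference between the two drugs is identical in every row; only the number of subjects moves the p-value from far above 0.05 to far below it.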

Moreover, what is meaningful for patients is the change in absolute risk brought about by an intervention, not the relative reduction in risk compared to something else. The occurrence of an adverse (stroke) event is about 5% per year in older people; the absolute reduction brings it to perhaps 4.5%, about 1 in 22 instead of 1 in 20. That is trivial, especially considering that such small differences, even from large trials, may actually be artefacts of some flaw or other in the trial protocol or practice.
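The gap between absolute and relative risk reduction is easy to check by hand. A small Python sketch, using the per-year rates quoted earlier for aspirin (5.83%) and clopidogrel (5.32%); the function names are mine, chosen for illustration:

```python
def absolute_risk_reduction(control_rate, treated_rate):
    """Difference in event rates, as a fraction (0.01 = 1 percentage point)."""
    return control_rate - treated_rate


def relative_risk_reduction(control_rate, treated_rate):
    """The same difference expressed as a share of the control rate."""
    return (control_rate - treated_rate) / control_rate


aspirin_rate = 0.0583      # adverse events per year on aspirin
clopidogrel_rate = 0.0532  # adverse events per year on clopidogrel

arr = absolute_risk_reduction(aspirin_rate, clopidogrel_rate)
rrr = relative_risk_reduction(aspirin_rate, clopidogrel_rate)

print(f"absolute reduction: {arr * 100:.2f} percentage points per year")
print(f"relative reduction: {rrr * 100:.1f}%")
```

The same result can be described as a reduction of roughly half a percentage point or of nearly 9%, depending on which measure is reported. The relative figure sounds far more impressive.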

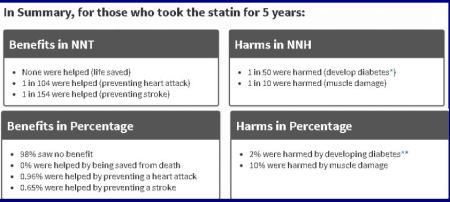

The easiest measure of efficacy to understand, but almost never shared with patients or doctors, is NNT: the number of patients who need to be treated in order to achieve the desired result in 1 patient. These numbers reveal an aspect of drug treatment that is not much emphasized: no drug is 100% effective in every patient.

Even less commonly shared is NNH: the number of patients who must receive a drug in order to have 1 patient harmed by that drug. This reveals an aspect of drug treatment that is not at all emphasized, indeed deliberately avoided: every drug has adverse effects to some degree.
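NNT is simply the reciprocal of the absolute risk reduction, and NNH is the same arithmetic applied to the absolute increase in the rate of a harm. As a rough Python sketch, using the clopidogrel and aspirin rates quoted earlier (the function name is mine, and rounding up to a whole patient is a common convention rather than anything taken from the cited study):

```python
import math


def number_needed_to_treat(control_rate, treated_rate):
    """Reciprocal of the absolute risk reduction between two event rates."""
    reduction = control_rate - treated_rate
    if reduction <= 0:
        raise ValueError("the treated group shows no absolute benefit")
    return 1 / reduction


# Per-year adverse event rates: 5.83% on aspirin, 5.32% on clopidogrel.
nnt = number_needed_to_treat(0.0583, 0.0532)

# Round up, since a fraction of a patient cannot be treated.
print(f"NNT is about {math.ceil(nnt)} patients per year")
```

On these figures, close to 200 patients would have to take clopidogrel instead of aspirin for a year for 1 of them to avoid an adverse event.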

A fine exposition of this appeared in the New York Times, “How to measure a medical treatment’s potential for harm”: to prevent 1 heart attack over a 2-year period, 2000 patients need to be treated with aspirin (NNT = 2000, so the benefit is 1 in 2000); but aspirin can also cause bleeding, NNH = 3333. So the chance of benefit, very small to start with, is less than twice the chance of harm. In other cases (mammograms are mentioned, and antibiotics to treat ear infections in children) NNH is small compared to NNT, meaning harm is more likely than benefit; yet current medical practice goes against this evidence.
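The aspirin comparison can be worked through directly from the two numbers quoted, NNT = 2000 and NNH = 3333. A short Python sketch (the helper name is mine, for illustration):

```python
def chance_per_patient(number_needed):
    """Chance that any one treated patient is the one affected."""
    return 1 / number_needed


aspirin_nnt = 2000  # patients treated for 2 years to prevent 1 heart attack
aspirin_nnh = 3333  # patients treated for 1 to suffer a bleed

benefit = chance_per_patient(aspirin_nnt)
harm = chance_per_patient(aspirin_nnh)

# Dividing the two chances is the same as dividing NNH by NNT.
benefit_to_harm = benefit / harm

print(f"chance of benefit: {benefit * 100:.3f}%")
print(f"chance of harm:    {harm * 100:.3f}%")
print(f"benefit is {benefit_to_harm:.2f} times as likely as harm")
```

A ratio below 1 would mean that a patient is more likely to be harmed than helped, which is the situation whenever NNH is smaller than NNT.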

More examples are given by Peter Elias.

Statins show up very badly indeed when evaluated in this manner:

For other critiques of using statins, see “STATINS are VERY BAD for you, especially FOR YOUR MUSCLES”; “Statins weaken muscles by design”; “Statins are very bad also for your brain”; “Statins: Scandalous new guidelines”.

——————————————————————

* Melody Ryan, Greta Combs, & Laroy P. Penix, “Preventing stroke in patients with Transient Ischemic Attacks”, American Family Physician, 60(1999) 2329-36

mo79uk said

I think the death knell for cholesterol is nearly audible now.

A week or so back a report was released in the UK (and echoed around the media) that questioned the basis for dietary guidelines, and the US may waive cholesterol as a heart risk: http://mobile.reuters.com/article/idUSKBN0LE2GQ20150210?irpc=932

To save face, I’m sure it’ll first be demoted as low risk and slip quietly off the radar to minimise lawsuits.


Henry Bauer said

mo79uk:

Yes. There is zero chance that there would be a straightforward acknowledgment of official error.


Vortex said

Dr. Bauer, I want to ask you a question.

Recently, I visited a web page which debated the problem of the excessive trust people put in the current consensus:

http://www.quora.com/When-it-so-often-fails-why-do-we-still-trust-consensus-in-science-so-deeply

I was shocked by the 1st and the 12th answers to the questions posed. In sum, these two responders, all rhetoric aside, claimed that non-scientists should never scrutinize or doubt dominant positions in science – at all. They should always trust and obey the current consensus without hesitation.

I find this position quite stupid. I think it denigrates most people, demanding that they give up their capacities for learning, thinking, doubting and criticising. It demands that they give up their intelligence, except for one trait – memorizing. According to such a view, all ordinary people should do is memorize the consensus position and live by it obediently, without trying – God forbid! – to check and evaluate any alternative views.

Such a position also idealizes science, describing it as a perfect self-correcting process, and undervalues contrarian views, many of which are well-founded… Oops, what am I doing?! I’m questioning mainstream science and its consensus! I’m not a scientist, therefore I’m not allowed!

Dr. Bauer, what do you think about it? Should we laypeople be allowed to think – and talk – about science, especially if we honestly try to learn and analyze? Or should we be allowed only to repeat what experts think?


Henry Bauer said

Vortex/Basil/Jason:

Nicely put. As you know, I agree.

However, I don’t think Internet places like Quora or Wikipedia should be relied on either.

If you want to understand science, read John Ziman’s Real Science and his earlier books, as well as my Scientific Literacy and the Myth of the Scientific Method and Science or Pseudoscience: Magnetic Healing.


Anon said

Mainstream media has started pointing this out: http://www.economist.com/news/leaders/21588069-scientific-research-has-changed-world-now-it-needs-change-itself-how-science-goes-wrong
